Anthropic has rapidly expanded its Claude model family, and with the release of Claude Opus 4.6 on February 5, 2026, the company now offers one of the most competitive AI lineups in the industry. From the lightning-fast Haiku to the powerhouse Opus, each model is engineered for a distinct role. This guide breaks down every current Claude model — specs, pricing, benchmarks, and when to use each one.

Claude Opus 4.6: The New Flagship

Released on February 5, 2026, Claude Opus 4.6 is Anthropic’s most capable model to date. It delivers record-breaking performance in coding, agentic tasks, and long-context reasoning — positioning it as a direct competitor to OpenAI’s GPT-5.2 and Google’s Gemini 3 Pro.

Key Specifications

- Context Window: 200K tokens standard, up to 1M tokens in beta

- Max Output: 128,000 tokens

- Input Types: Text and images

- API Pricing: $5 / 1M input tokens, $25 / 1M output tokens

- Long Context Pricing (>200K): $10 / 1M input, $37.50 / 1M output

- Model ID: claude-opus-4-6
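
To make the two pricing tiers concrete, here is a minimal cost estimator built from the rates listed above. It is a sketch, not an official billing formula: the function name is hypothetical, and it assumes that once a request's input exceeds 200K tokens the long-context rates apply to every token in that request rather than only to the excess.

```python
# Opus 4.6 rates from the list above, in USD per 1M tokens.
STANDARD = {"input": 5.00, "output": 25.00}
LONG_CONTEXT = {"input": 10.00, "output": 37.50}
LONG_CONTEXT_THRESHOLD = 200_000  # input tokens


def estimate_opus_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one request.

    Assumption: when the input exceeds 200K tokens, the long-context
    rates apply to the whole request, not only the tokens past 200K.
    """
    rates = LONG_CONTEXT if input_tokens > LONG_CONTEXT_THRESHOLD else STANDARD
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000


if __name__ == "__main__":
    print(f"100K in / 10K out: ${estimate_opus_cost(100_000, 10_000):.3f}")
    print(f"300K in / 10K out: ${estimate_opus_cost(300_000, 10_000):.3f}")
```

Under that assumption, crossing the 200K threshold changes the price of the entire request, so trimming a prompt from just over 200K to just under it saves more than the removed tokens alone would suggest.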

Benchmark Performance

Opus 4.6 sets new records across multiple industry benchmarks:

- SWE-bench Verified: 80.8% — among the highest scores for any model

- Terminal-Bench 2.0: 65.4% — the highest score ever recorded on this agentic coding evaluation

- Humanity’s Last Exam: 53.1% — first place among all models

- BrowseComp: 84% — first place

- GPQA Diamond: 91.3%

- ARC-AGI v2: 68.8%

- BigLaw Bench: 90.2% — highly relevant for legal and compliance teams

- OSWorld: 72.7% for agentic computer use

- AIME 2025: 99.79%

On GDPval-AA, which measures real-world professional tasks in finance and legal workflows, Opus 4.6 reaches an Elo rating of 1606 — a 144-point lead over GPT-5.2.

New Features

- Adaptive Thinking: Claude dynamically decides when and how much to think, with effort levels from low to max. At the default high level, Claude will almost always engage in extended reasoning.

- Agent Teams: A new research preview feature in Claude Code that enables multiple AI agents to work simultaneously on different aspects of a coding project, coordinating autonomously.

- Compaction API: Automatic server-side context summarization, enabling effectively infinite conversations by summarizing earlier context as the window fills.

- Fast Mode: Up to 2.5x faster output generation at premium pricing ($30 / $150 per MTok) — same model, faster inference.
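
The idea behind compaction, summarizing earlier context as the window fills, can be illustrated with a small client-side toy. This is not the Compaction API itself, which runs server-side. Every name below (`compact`, `rough_token_count`, `toy_summarizer`) is hypothetical, and the four-characters-per-token estimate is only a rough stand-in for a real tokenizer.

```python
from typing import Callable, List


def rough_token_count(text: str) -> int:
    """Crude stand-in for a tokenizer: roughly 4 characters per token."""
    return max(1, len(text) // 4)


def compact(
    history: List[str],
    budget: int,
    summarize: Callable[[List[str]], str],
    keep_recent: int = 2,
) -> List[str]:
    """Fold older turns into one summary once the history exceeds the budget.

    The most recent `keep_recent` turns are kept verbatim.
    """
    total = sum(rough_token_count(turn) for turn in history)
    if total <= budget or len(history) <= keep_recent:
        return history
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    return [summarize(older)] + recent


def toy_summarizer(turns: List[str]) -> str:
    """Placeholder; a real system would ask a model to write the summary."""
    return f"[summary of {len(turns)} earlier turns]"
```

Calling `compact(history, budget=150, summarize=toy_summarizer)` on a four-turn history that exceeds the budget returns three entries: one summary line followed by the last two turns unchanged.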

Claude Opus 4.5: The Predecessor

Released on November 24, 2025, Claude Opus 4.5 was Anthropic’s flagship before the 4.6 upgrade. It remains available and shares the same pricing as Opus 4.6 ($5 / $25 per MTok). Opus 4.5 was released under AI Safety Level 3 (ASL-3) protections, making it the first commercially deployed model at that safety tier.

Opus 4.5 represented a 67% cost reduction over the previous generation (Opus 4.1 at $15 / $75), while delivering superior performance across all benchmarks.

Claude Sonnet 4.5: The Production Workhorse

Released on September 29, 2025, Claude Sonnet 4.5 is designed as the primary production model, delivering 90-95% of Opus’s performance at 60% of the price ($3 / $15 versus $5 / $25 per MTok).

- Context Window: 200K standard, up to 1M tokens in beta

- Max Output: 64,000 tokens

- API Pricing: $3 / 1M input, $15 / 1M output

- Long Context Pricing (>200K): $6 / 1M input, $22.50 / 1M output

- SWE-bench Verified: 77.2%

- Safety Level: ASL-3

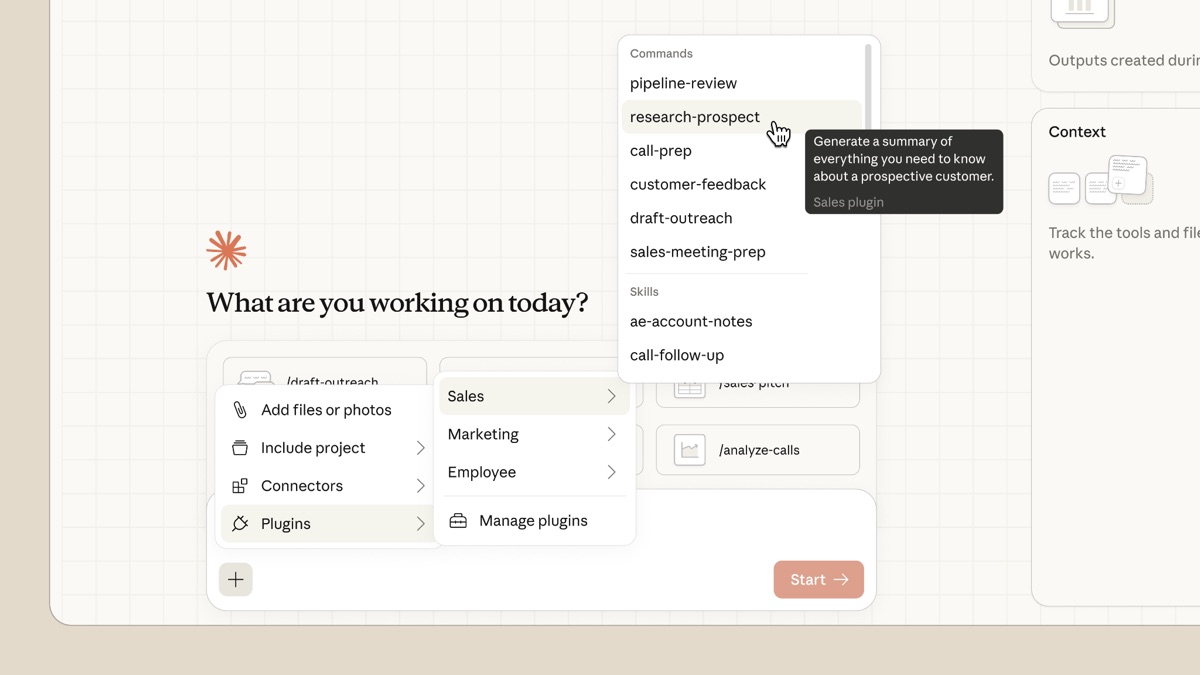

Sonnet 4.5 excels as an orchestrator in multi-agent systems — it can break complex problems into multi-step plans and delegate subtasks to faster models like Haiku. It can maintain autonomous operation for over 30 hours on complex tasks, making it ideal for long-running agent workflows.

Claude Haiku 4.5: Speed and Cost Efficiency

Released on October 15, 2025, Claude Haiku 4.5 is Anthropic’s fastest and most affordable model, achieving 90% of Sonnet 4.5’s performance at one-third of the price ($1 / $5 versus $3 / $15 per MTok).

- Context Window: 200,000 tokens

- Max Output: 64,000 tokens

- API Pricing: $1 / 1M input, $5 / 1M output

- SWE-bench Verified: 73.3%

- Safety Level: ASL-2

Haiku 4.5 supports extended thinking and is commonly used for customer support, real-time interactions, classification tasks, rapid prototyping, and as a fast sub-agent in multi-agent systems. Its SWE-bench score of 73.3% puts it within five percentage points of Sonnet 4.5 — remarkable for a model at one-third the cost.
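
The price and benchmark comparison can be checked with a few lines of arithmetic. The snippet below uses only the figures quoted in this guide; the variable names are illustrative.

```python
# Prices (USD per 1M tokens) and SWE-bench Verified scores from this guide.
sonnet = {"input": 3.00, "output": 15.00, "swe_bench": 77.2}
haiku = {"input": 1.00, "output": 5.00, "swe_bench": 73.3}

input_ratio = haiku["input"] / sonnet["input"]
output_ratio = haiku["output"] / sonnet["output"]
score_gap = sonnet["swe_bench"] - haiku["swe_bench"]

print(f"Haiku input price ratio:  {input_ratio:.2f}")
print(f"Haiku output price ratio: {output_ratio:.2f}")
print(f"SWE-bench gap: {score_gap:.1f} points")
```

Both price ratios come out to one-third, and the SWE-bench gap is 3.9 percentage points.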

Full Model Comparison Table

| Model | Context Window | Max Output | Input Price (USD per 1M tokens) | Output Price (USD per 1M tokens) | Best For |
|---|---|---|---|---|---|
| Opus 4.6 | 200K (1M beta) | 128K | $5 | $25 | Hardest tasks, coding, agentic work |
| Opus 4.5 | 200K (1M beta) | 128K | $5 | $25 | Complex reasoning, multi-step tasks |
| Sonnet 4.5 | 200K (1M beta) | 64K | $3 | $15 | Production workloads, orchestration |
| Haiku 4.5 | 200K | 64K | $1 | $5 | Speed, cost efficiency, sub-agents |
| Opus 4.1 (legacy) | 200K | 32K | $15 | $75 | Legacy, superseded by 4.5 series |
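
If you want to act on the table programmatically, one option is to encode it as data and filter on your requirements. The sketch below copies the table's values as given (standard context windows, not the 1M beta). `ModelSpec` and `cheapest_model` are hypothetical names, and the display names are not API model IDs.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelSpec:
    name: str
    context_tokens: int      # standard context window
    max_output_tokens: int
    input_price: float       # USD per 1M input tokens
    output_price: float      # USD per 1M output tokens


# Values copied from the comparison table above.
CATALOG: List[ModelSpec] = [
    ModelSpec("Opus 4.6", 200_000, 128_000, 5.0, 25.0),
    ModelSpec("Opus 4.5", 200_000, 128_000, 5.0, 25.0),
    ModelSpec("Sonnet 4.5", 200_000, 64_000, 3.0, 15.0),
    ModelSpec("Haiku 4.5", 200_000, 64_000, 1.0, 5.0),
    ModelSpec("Opus 4.1 (legacy)", 200_000, 32_000, 15.0, 75.0),
]


def cheapest_model(min_output_tokens: int) -> Optional[ModelSpec]:
    """Return the lowest-priced model whose output cap meets the requirement."""
    candidates = [m for m in CATALOG if m.max_output_tokens >= min_output_tokens]
    return min(
        candidates,
        key=lambda m: (m.input_price, m.output_price),
        default=None,
    )
```

For example, `cheapest_model(50_000)` selects Haiku 4.5, while a 100K-token output requirement leaves only the Opus 4.5/4.6 tier. Price is the only ranking criterion here; a real selector would also weigh quality and latency.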

Cost Optimization Features

Anthropic offers several ways to reduce API costs significantly:

- Batch API: 50% discount on both input and output tokens for asynchronous, non-urgent workloads (e.g., Sonnet 4.5 drops to $1.50 / $7.50 per MTok)

- Prompt Caching: Up to 90% savings on repeated content. Cache reads cost just $0.50 per MTok for Opus models, with a 25% surcharge on initial cache writes.

- Extended Thinking: Available on all 4.5+ models. Thinking tokens are billed at standard output rates with no separate pricing tier, so budget for them as output tokens.
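
A rough calculation shows how the batch discount and prompt caching change a bill. This is a sketch using the rates quoted above. The function names are hypothetical, and the caching model assumes the first call writes the cache and every later call is a hit, ignoring cache expiry.

```python
# Opus input rate from this guide, USD per 1M tokens.
OPUS_INPUT = 5.00

BATCH_DISCOUNT = 0.50          # 50% off input and output
CACHE_READ_PRICE = 0.50        # USD per 1M cached input tokens (Opus)
CACHE_WRITE_SURCHARGE = 0.25   # 25% on top of the normal input rate


def batch_cost(
    input_tokens: int, output_tokens: int, input_rate: float, output_rate: float
) -> float:
    """USD cost of a Batch API job at the 50% discount."""
    full = (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000
    return full * (1 - BATCH_DISCOUNT)


def cached_prompt_cost(prefix_tokens: int, calls: int) -> float:
    """USD input cost of reusing one cached prefix across `calls` Opus requests.

    Assumption: call 1 writes the cache, calls 2..n read from it.
    """
    if calls < 1:
        raise ValueError("calls must be at least 1")
    write = prefix_tokens * OPUS_INPUT * (1 + CACHE_WRITE_SURCHARGE)
    reads = prefix_tokens * CACHE_READ_PRICE * (calls - 1)
    return (write + reads) / 1_000_000


if __name__ == "__main__":
    # Sonnet 4.5 at $3 / $15: 1M input + 1M output tokens in a batch job.
    print(f"Batch job: ${batch_cost(1_000_000, 1_000_000, 3.0, 15.0):.2f}")
    # A 100K-token prefix reused across 10 Opus calls.
    print(f"Cached prefix: ${cached_prompt_cost(100_000, 10):.3f}")
```

Under these assumptions, a 100K-token prefix reused across 10 Opus calls costs about $1.08 in input charges instead of $5.00 uncached. A single call costs more with caching than without, because of the write surcharge.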

Which Claude Model Should You Choose?

- For the hardest problems: Opus 4.6 — record-breaking coding, legal analysis, and agentic performance

- For production workloads: Sonnet 4.5 — 90-95% of Opus quality at 60% of the cost

- For speed and volume: Haiku 4.5 — fastest model, ideal for classification, routing, and real-time apps

- For multi-agent systems: Combine Sonnet 4.5 (orchestrator) with Haiku 4.5 (executor) for optimal cost-performance

- For maximum accuracy on critical tasks: Opus 4.6 with adaptive thinking set to max effort
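
The recommendations above amount to a routing table, which might be sketched as follows. The `Task` categories and `pick_model` helper are hypothetical. The strings are this guide's display names rather than API model IDs, and the effort values follow the "low to max" levels described for Opus 4.6.

```python
from enum import Enum
from typing import Dict, Optional


class Task(Enum):
    HARD_PROBLEM = "hard_problem"
    PRODUCTION = "production"
    HIGH_VOLUME = "high_volume"
    CRITICAL = "critical"


# Display names from this guide; map them to real model IDs in your own code.
# effort=None means this guide gives no effort recommendation for that case.
ROUTES: Dict[Task, Dict[str, Optional[str]]] = {
    Task.HARD_PROBLEM: {"model": "Opus 4.6", "effort": "high"},
    Task.PRODUCTION: {"model": "Sonnet 4.5", "effort": None},
    Task.HIGH_VOLUME: {"model": "Haiku 4.5", "effort": None},
    Task.CRITICAL: {"model": "Opus 4.6", "effort": "max"},
}

# Multi-agent pairing suggested above: one planner, many fast workers.
MULTI_AGENT = {"orchestrator": "Sonnet 4.5", "executor": "Haiku 4.5"}


def pick_model(task: Task) -> Dict[str, Optional[str]]:
    """Look up the recommended model and effort level for a task category."""
    return ROUTES[task]
```

Keeping the mapping in one place makes it easy to swap models as pricing or benchmarks change without touching the calling code.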

Availability

All current Claude models are available through the Anthropic API, Amazon Bedrock, and Google Vertex AI. Consumer access is available through claude.ai, with the Pro plan ($20/month) offering higher usage limits and priority access to the latest models. All models support text and image input, multilingual capabilities, and tool use.

The Bottom Line

Anthropic’s Claude lineup in 2026 is built around a clear tiered strategy: Haiku for speed, Sonnet for production, and Opus for maximum capability. The 4.5/4.6 generation cuts Opus pricing by 67% relative to Opus 4.1 ($15 / $75 down to $5 / $25 per MTok) while posting stronger benchmark results. With Opus 4.6 leading on coding evaluations (Terminal-Bench 2.0, SWE-bench) and professional task benchmarks (GDPval, BigLaw Bench), Claude has established itself as a top-tier choice for developers and enterprises building AI-powered applications.