Google’s Gemini 3 family represents a generational leap in AI capability. With Gemini 3 Pro topping the LMArena leaderboard and Gemini 3 Flash delivering Pro-grade intelligence at a fraction of the cost, Google has assembled one of the most compelling model lineups of 2026. This guide covers every current Gemini model: performance benchmarks, pricing, context windows, and practical recommendations.

Gemini 3 Pro: The Reasoning Powerhouse

Released on November 18, 2025, Gemini 3 Pro is Google’s most capable model, engineered for PhD-level reasoning, complex mathematics, and multimodal understanding. It currently holds the #1 position on the LMArena leaderboard with a breakthrough Elo score of 1501.

Key Specifications

- Context Window: 1M tokens standard, up to 2M tokens at higher tiers

- Max Output: 64,000 tokens

- Knowledge Cutoff: January 2025

- Input Types: Text and images (multimodal)

- API Pricing: $2–4 / 1M input tokens (varies by context length)

- Dynamic Thinking: Enabled by default for step-by-step reasoning
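
Because the specification list gives only a $2–4 range for Pro input pricing, a budget estimate is best expressed as a pair of bounds. The sketch below is a minimal illustration under that assumption; the function name `pro_input_cost_bounds` is hypothetical, and the context-length threshold at which the price changes is not stated here, so it is deliberately left out.

```python
# Published range for Gemini 3 Pro input, in USD per 1M tokens (from the list above).
# Assumption: the real price for a given request falls somewhere inside this range.
PRO_INPUT_PRICE_RANGE = (2.00, 4.00)


def pro_input_cost_bounds(input_tokens: int) -> tuple[float, float]:
    """Return the (lowest, highest) possible input cost in USD for a prompt."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    millions = input_tokens / 1_000_000
    low_price, high_price = PRO_INPUT_PRICE_RANGE
    return (round(millions * low_price, 4), round(millions * high_price, 4))


# Example: bounds for a 250,000-token prompt.
print(pro_input_cost_bounds(250_000))
```

Output-token charges are not included, since the list does not give a figure for them.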

Benchmark Performance

- LMArena Elo: 1501 — #1 on the leaderboard

- GPQA Diamond: 91.9% — PhD-level reasoning

- Humanity’s Last Exam: 37.5% (without tools)

- AIME 2025: 100% with code execution, 95% without tools

- MathArena Apex: 23.4% — new state of the art, 20x improvement over previous versions

- ARC-AGI-2: 31.1% — nearly double GPT-5.1’s 17.6%

- SWE-bench Verified: 76.2%

- LiveCodeBench Pro: Elo 2,439 — 200+ points ahead of GPT-5.1

- MMMU-Pro: 81.0% — 5-point lead in multimodal understanding

- Video-MMMU: 87.6%

- MMMLU: 91.8% multilingual Q&A

Gemini 3 Pro offers the largest native context window among frontier models, up to 2M tokens at higher tiers, and leads on visual reasoning benchmarks (MMMU-Pro), making it the top choice for tasks that involve processing images, videos, and extremely long documents.

Gemini 3 Flash: Frontier Intelligence at Flash Speed

Gemini 3 Flash is the standout release of the Gemini 3 family — a model that delivers Pro-grade reasoning at dramatically lower cost and 3x faster speed. It has become the default model in the Gemini app for all users globally.

Key Specifications

- Context Window: 1M tokens

- Input Types: Text, images, audio

- API Pricing: $0.50 / 1M input tokens, $3 / 1M output tokens

- Audio Input: $1 / 1M input tokens

- Speed: 3x faster than Pro, 4x faster multimodal analysis than Gemini 2.5 Pro

- Thinking Levels: Minimal (~512 tokens), low, medium, high
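
To make the per-token prices above concrete, here is a minimal cost estimator that uses only the three Flash rates listed in this section. The function and dictionary names are hypothetical, and the sketch assumes that each token category is billed independently at a flat rate.

```python
# Gemini 3 Flash prices in USD per 1M tokens, taken from the list above.
FLASH_PRICES_USD_PER_1M = {
    "input": 0.50,        # standard (non-audio) input tokens
    "audio_input": 1.00,  # audio input tokens
    "output": 3.00,       # output tokens
}


def estimate_flash_cost(
    input_tokens: int = 0,
    audio_input_tokens: int = 0,
    output_tokens: int = 0,
) -> float:
    """Estimate the USD cost of one request, assuming flat per-category rates."""
    usage = {
        "input": input_tokens,
        "audio_input": audio_input_tokens,
        "output": output_tokens,
    }
    if any(count < 0 for count in usage.values()):
        raise ValueError("token counts must be non-negative")
    total = sum(
        count / 1_000_000 * FLASH_PRICES_USD_PER_1M[kind]
        for kind, count in usage.items()
    )
    return round(total, 6)


# Example: 200k standard input tokens, 50k audio tokens, 10k output tokens.
print(estimate_flash_cost(input_tokens=200_000, audio_input_tokens=50_000, output_tokens=10_000))
```

Whether thinking tokens are billed as output is not covered in this section, so the sketch does not model them separately.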

Benchmark Performance

- GPQA Diamond: 90.4%, within 1.5 percentage points of Pro’s 91.9%

- SWE-bench Verified: 78% — actually surpasses Pro’s 76.2% in agentic coding

- Humanity’s Last Exam: 33.7% (without tools)

Flash is built on Pro’s transformer-based sparse mixture-of-experts (MoE) architecture. While it may house over a trillion parameters, it activates only 5 to 30 billion per inference, which helps explain how it approaches Pro’s benchmark scores while running significantly faster. On the Artificial Analysis Intelligence Index, Flash ranks third overall, trailing only Gemini 3 Pro and GPT-5.2, while offering the highest intelligence-per-dollar ratio of any model currently available.
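
The idea behind sparse activation can be illustrated with a toy top-k router. This is a generic sketch of how MoE routing is commonly described, not Google's implementation; the function names and the example logits are hypothetical, and the parameter counts are the speculative figures quoted above.

```python
import math


def top_k_route(router_logits: list[float], k: int) -> dict[int, float]:
    """Select the k highest-scoring experts and softmax-normalise their weights.

    Only the selected experts would run for this token; the rest stay idle.
    """
    if not 0 < k <= len(router_logits):
        raise ValueError("k must be between 1 and the number of experts")
    ranked = sorted(range(len(router_logits)), key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    peak = max(router_logits[i] for i in chosen)  # subtract the max for numerical stability
    exps = {i: math.exp(router_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: value / total for i, value in exps.items()}


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of the model's parameters that take part in one forward pass."""
    return active_params / total_params


# Four toy experts, two active per token.
print(top_k_route([0.1, 2.0, -1.0, 1.5], k=2))

# With the article's figures: 5B to 30B active out of roughly 1T total.
print(active_fraction(5e9, 1e12), active_fraction(30e9, 1e12))
```

Under those figures, between roughly 0.5% and 3% of the parameters would be used per forward pass, which is where the speed and cost advantage would come from.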

Gemini 3 Deep Think: Maximum Reasoning

Gemini 3 Deep Think is not a separate model but an advanced reasoning mode available on Gemini 3 Pro. When activated, it enables extended step-by-step thinking for the hardest problems — trading speed for substantially improved accuracy.

Deep Think Benchmark Improvements Over Standard Pro

- GPQA Diamond: 93.8% (vs. 91.9% standard)

- Humanity’s Last Exam: 41.0% (vs. 37.5% standard)

- ARC-AGI-2: 45.1% with code execution — unprecedented performance on novel problem-solving
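
The size of the Deep Think gains can be read two ways: as percentage points or as a relative improvement. The short sketch below computes both for the two benchmarks that list a standard-mode score alongside; the helper name `gain` is hypothetical.

```python
# (Deep Think score, standard Pro score) in percent, from the list above.
DEEP_THINK_VS_STANDARD = {
    "GPQA Diamond": (93.8, 91.9),
    "Humanity's Last Exam": (41.0, 37.5),
}


def gain(deep_think: float, standard: float) -> tuple[float, float]:
    """Return (gain in percentage points, relative gain in percent)."""
    points = deep_think - standard
    relative = points / standard * 100
    return (round(points, 1), round(relative, 1))


for benchmark, (deep, standard) in DEEP_THINK_VS_STANDARD.items():
    points, relative = gain(deep, standard)
    print(f"{benchmark}: +{points} points ({relative}% relative)")
```

The ARC-AGI-2 figure is left out because it is reported with code execution and has no like-for-like standard score in this list.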

Deep Think is available to Google AI Ultra subscribers. Responses take longer as the model reasons through complex chains, but the payoff is significant — particularly for spatial reasoning, logic-heavy tasks, and problems the model has never encountered during training.

Legacy Models: Gemini 2.5

While the Gemini 3 family has taken center stage, Gemini 2.5 Flash remains available as the default model for free-tier Gemini users. It offers solid baseline performance at lower cost, though it has been comprehensively surpassed by Gemini 3 Flash across all benchmarks.

Gemini 2.5 Pro is still accessible but is no longer recommended for new projects, as Gemini 3 Flash alone outperforms it while running 3x faster.

Full Model Comparison Table

| Model | Context Window | Input Price (per 1M tokens) | Output Price (per 1M tokens) | Speed | Best For |
|---|---|---|---|---|---|
| Gemini 3 Pro | 1M–2M | $2–4 | Varies | Standard | Hardest reasoning, 2M context, research |
| Gemini 3 Pro + Deep Think | 1M–2M | $2–4 | Varies | Slow | Maximum accuracy on novel problems |
| Gemini 3 Flash | 1M | $0.50 | $3 | 3x faster than Pro | 95% of use cases, best value |
| Gemini 2.5 Flash | 1M | Lower | Lower | Fast | Free tier, simple tasks |
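
For programmatic use, the table can be encoded as plain data and queried. The sketch below is one possible encoding, with some assumptions stated in the comments: Pro's price is taken at the low end of its range, its 2M context is treated as available, and Gemini 2.5 Flash has no numeric price because the table only says "Lower". The class and function names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelRow:
    name: str
    max_context_tokens: int
    min_input_price_usd: Optional[float]  # per 1M tokens; None if the table gives no number


MODELS = [
    ModelRow("Gemini 3 Pro", 2_000_000, 2.00),               # assumes the 2M tier is available
    ModelRow("Gemini 3 Pro + Deep Think", 2_000_000, 2.00),  # same pricing row as Pro
    ModelRow("Gemini 3 Flash", 1_000_000, 0.50),
    ModelRow("Gemini 2.5 Flash", 1_000_000, None),           # table says only "Lower"
]


def cheapest_priced_model(prompt_tokens: int) -> Optional[str]:
    """Return the lowest-priced model whose context window fits the prompt.

    Models without a numeric price are skipped. Ties keep table order.
    """
    candidates = [
        row for row in MODELS
        if row.min_input_price_usd is not None and row.max_context_tokens >= prompt_tokens
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda row: row.min_input_price_usd).name


print(cheapest_priced_model(500_000))
print(cheapest_priced_model(1_500_000))
```

Price is only one column of the table; speed and the "Best For" guidance would need to be weighed separately.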

Subscription Tiers

Google offers three consumer access tiers for Gemini:

- Free: Access to Gemini 2.5 Flash as default, with 5–10 daily uses of Gemini 3 Pro

- Google AI Pro: Full access to Gemini 3 Flash and Pro, including advanced multimodal reasoning and Workspace integration

- Google AI Ultra: Highest access tier — unlocks Deep Think mode, Deep Research, maximum usage limits, and priority access to new features

Flash vs. Pro: Which Should You Use?

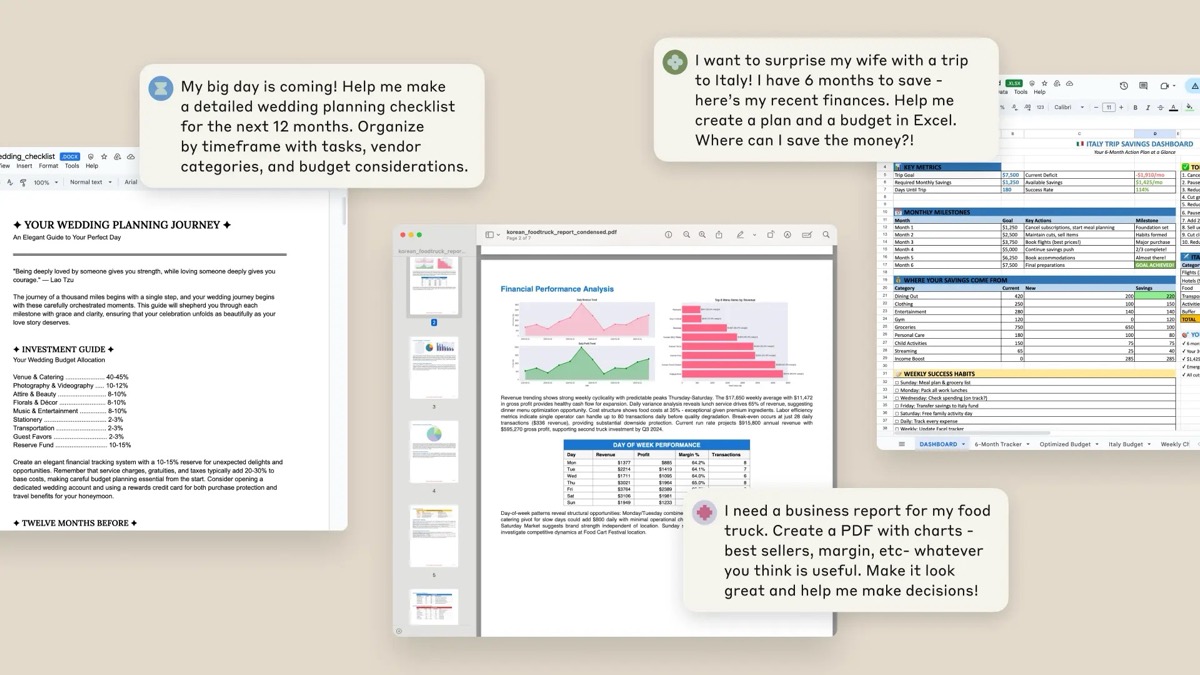

For most users and developers, the answer is straightforward: use Flash unless you have a specific reason to use Pro. Here’s when Pro is worth the premium:

- You need the 2M token context window for extremely large documents or codebases

- You need Deep Think mode for the hardest reasoning problems

- You require the absolute highest accuracy on PhD-level reasoning tasks

- You’re working on novel problems where the ~1.5-point gap on GPQA Diamond matters

For everything else, which Google estimates covers 95% of use cases, Flash delivers Pro-grade intelligence at 75% lower input cost ($0.50 versus Pro’s $2 per 1M input tokens) and 3x faster speed. A hybrid approach that uses Flash for routine work and Pro for critical analysis keeps spending down while preserving access to maximum capability.
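
The decision rule in this section can be written down as a small routing function. This is a sketch of the hybrid approach under the article's own criteria, not an official recommendation engine; the function names, flags, and returned labels are hypothetical and are not API model identifiers.

```python
FLASH_CONTEXT_TOKENS = 1_000_000
PRO_MAX_CONTEXT_TOKENS = 2_000_000  # assumes the higher-tier 2M window is available


def choose_model(
    *,
    prompt_tokens: int = 0,
    needs_deep_think: bool = False,
    needs_top_accuracy: bool = False,
) -> str:
    """Default to Flash; escalate to Pro only for the reasons listed above."""
    if prompt_tokens > PRO_MAX_CONTEXT_TOKENS:
        raise ValueError("prompt exceeds the largest context window discussed here")
    if needs_deep_think:
        return "Gemini 3 Pro with Deep Think"
    if needs_top_accuracy or prompt_tokens > FLASH_CONTEXT_TOKENS:
        return "Gemini 3 Pro"
    return "Gemini 3 Flash"


def input_cost_saving(flash_price: float = 0.50, pro_price: float = 2.00) -> float:
    """Fractional input-cost saving of Flash relative to Pro's lowest listed price."""
    return (pro_price - flash_price) / pro_price


print(choose_model(prompt_tokens=10_000))
print(choose_model(prompt_tokens=1_500_000))
print(input_cost_saving())
```

The saving is computed against Pro's lowest listed input price of $2; against the $4 end of the range, the gap would be larger.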

How Gemini 3 Compares to the Competition

In the three-way race between Google, OpenAI, and Anthropic:

- Gemini 3 Pro leads on visual reasoning (MMMU-Pro), multilingual tasks (MMMLU), and holds the largest context window at 2M tokens

- GPT-5.2 edges ahead on graduate-level reasoning (GPQA Diamond) and agentic tool use

- Claude Opus 4.6 dominates on coding benchmarks (Terminal-Bench 2.0, SWE-bench) and professional task evaluations

- Gemini 3 Flash offers the best intelligence-per-dollar ratio of any frontier model

The Bottom Line

Google’s Gemini 3 family sets a new standard for what’s achievable at each price point. Gemini 3 Flash, in particular, has redefined the value proposition — delivering frontier-class performance at $0.50 per million input tokens. For developers building on the API, Flash is the clear starting point. For researchers, data scientists, and enterprises tackling the hardest problems in reasoning, multimodal understanding, or processing massive documents, Gemini 3 Pro with Deep Think remains unmatched in several key areas. The Gemini 3 generation represents Google’s strongest competitive position in the AI race to date.