OpenAI just made its most direct pitch yet to the C-suite. On April 8, 2026, the company published a detailed look at what it’s calling the next phase of enterprise AI — and for once, this isn’t just a product announcement dressed up in strategy language. It’s a genuine signal that the way companies deploy AI is being restructured from the ground up. ChatGPT Enterprise, OpenAI Codex, the Frontier model tier, and a new push toward company-wide AI agents are all part of a coordinated move that OpenAI is betting its commercial future on.

How We Got Here: The Enterprise AI Backstory

OpenAI’s enterprise story started quietly. ChatGPT launched in late 2022 and immediately became a consumer phenomenon, but businesses were cautious. Data privacy questions, unpredictable outputs, and the lack of any real administrative control made it hard for IT departments to say yes. ChatGPT Enterprise arrived in August 2023 as a direct response — offering no training on company data, SOC 2 compliance, admin controls, and longer context windows.

That was version one of the enterprise pitch. It worked, sort of. OpenAI reported that ChatGPT Enterprise crossed one million business users in early 2025, but adoption was still concentrated in specific functions — marketing copy, internal knowledge search, code review. The usage pattern looked more like a productivity tool than a core business system.

The problem wasn’t the product. It was the mental model. Companies were treating AI like a smarter search engine. OpenAI wants them to treat it like a workforce layer. That shift is what this announcement is really about.

For context on how OpenAI has been positioning itself more broadly, our earlier coverage of OpenAI’s industrial policy blueprint gives a useful window into the company’s longer-term thinking on AI and economic structure.

What’s Actually in the Announcement

Let’s break down the concrete pieces, because there’s a lot being bundled together here.

The Frontier Tier

Frontier is OpenAI’s new top-end enterprise tier, sitting above standard ChatGPT Enterprise. Think of it as a dedicated relationship rather than a software subscription. Frontier customers get early access to new models, custom model fine-tuning options, higher rate limits, dedicated support teams, and deeper integration pathways into their existing infrastructure.

OpenAI hasn’t published a public price for Frontier — it’s invitation-only and negotiated directly, which tells you the target customer is Fortune 500, not a 50-person startup. This is OpenAI going after the same accounts that Microsoft’s Azure OpenAI Service has been quietly locking up for two years. The difference: Frontier is sold as an OpenAI relationship, not a Microsoft one.

ChatGPT Enterprise Upgrades

The core ChatGPT Enterprise product is getting meaningful updates. Key additions include:

- Deeper integration with enterprise data sources through improved connectors and retrieval systems

- Multi-model access within a single Enterprise account — so teams can run o3 for complex reasoning tasks and GPT-4o for faster, cheaper everyday queries without managing separate contracts

- Enhanced admin controls including more granular permission settings per department or team

- Expanded audit logs and compliance tooling, targeting regulated industries like finance and healthcare

- Native support for structured output formats that plug directly into enterprise workflows

The multi-model angle is smarter than it might first appear. Right now, enterprise buyers often have to make a binary choice about which model to standardize on. OpenAI is removing that constraint, which makes the platform stickier and harder for Google or Anthropic to displace with a single competing model.
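To make the multi-model idea concrete, here is a minimal sketch of how a team might route work between the two models named above. The model names come from the announcement as described here; the `Task` type, the `choose_model` function, and the length threshold are hypothetical illustrations, not anything OpenAI has published, and the sketch makes no API calls.

```python
from dataclasses import dataclass

# Model names are taken from the article; the routing rule is an assumption.
REASONING_MODEL = "o3"
FAST_MODEL = "gpt-4o"


@dataclass
class Task:
    prompt: str
    needs_multistep_reasoning: bool = False


def choose_model(task: Task, long_prompt_chars: int = 4000) -> str:
    """Pick a model name for a task using a hypothetical heuristic.

    Tasks flagged as needing multi-step reasoning, or carrying very long
    prompts, go to the reasoning model; everything else goes to the
    faster, cheaper one.
    """
    if task.needs_multistep_reasoning or len(task.prompt) > long_prompt_chars:
        return REASONING_MODEL
    return FAST_MODEL


tasks = [
    Task("Summarize this meeting note in two sentences."),
    Task(
        "Reconcile these three ledgers and explain every discrepancy.",
        needs_multistep_reasoning=True,
    ),
]
for t in tasks:
    print(choose_model(t), "<-", t.prompt)
```

In practice the routing signal would likely come from the workflow itself (a task type or a department policy) rather than prompt length, but the point stands: one account, one contract, and a per-task choice of model.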

Codex’s Growing Role

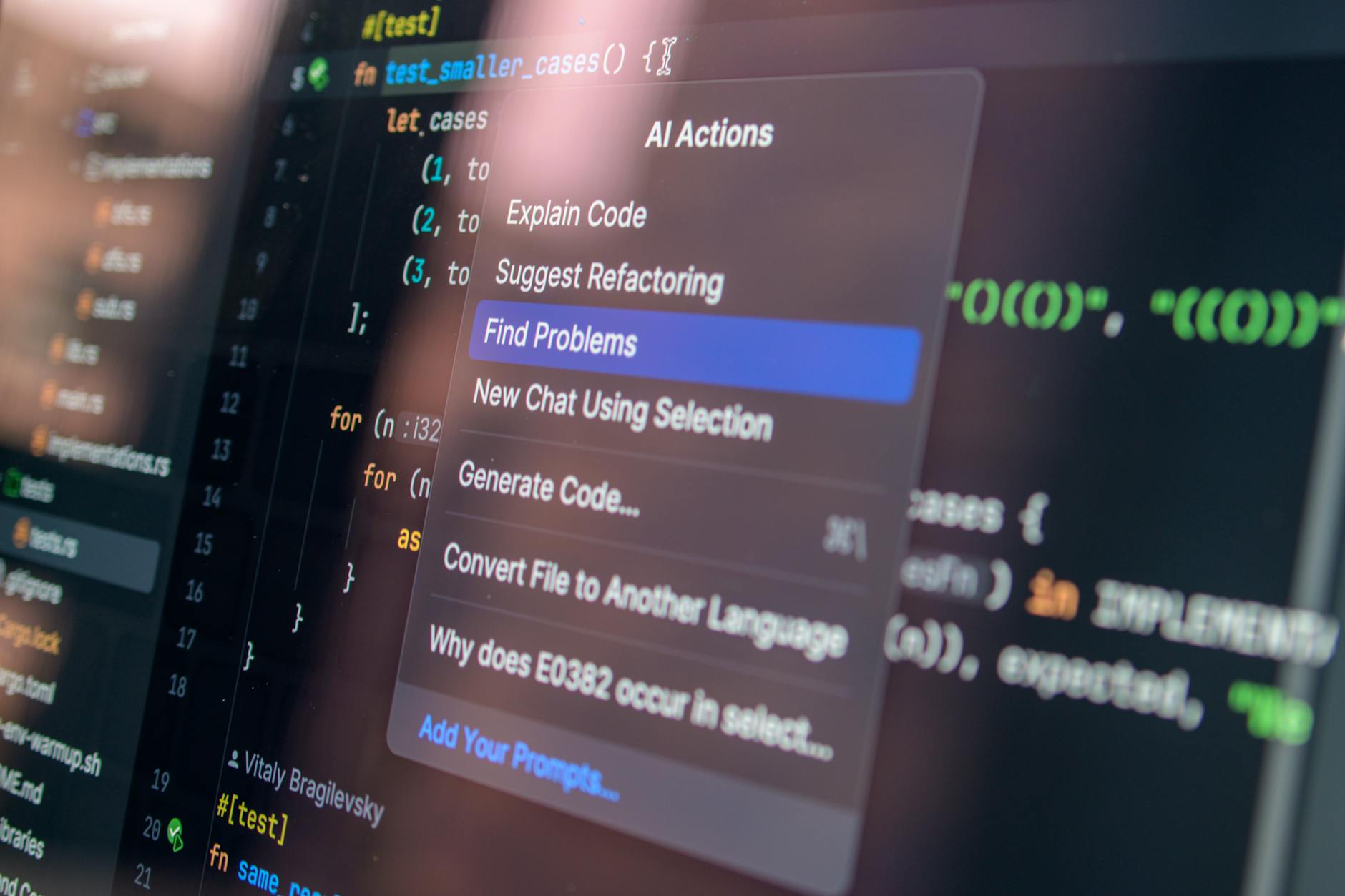

OpenAI Codex is being positioned as a central pillar of the enterprise story, not just a developer tool. OpenAI is pushing Codex as the engine for automating software-adjacent work across the business — not just writing code, but generating reports, handling data pipelines, and building internal tools without dedicated engineering bandwidth.

We covered the pay-as-you-go Codex pricing shift earlier this year, which made it accessible to smaller teams. Now OpenAI is also making it a flagship enterprise offering with volume pricing and tighter integration into the broader ChatGPT Enterprise environment.

Company-Wide AI Agents

This is the most ambitious piece. OpenAI is describing a future — already beginning for some Frontier customers — where AI agents operate across an entire company’s workflows, not just within individual users’ sessions. These agents can be assigned to specific functions: finance, HR, operations, customer support. They have persistent memory, defined scopes of authority, and can hand tasks between each other.

The framing OpenAI is using is deliberate: not “AI assistant” but “AI colleague” or even “AI employee.” That’s a significant rhetorical shift, and it has real implications for how companies will need to think about accountability, oversight, and what work actually means in this context.
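The three properties described above (persistent memory, a defined scope of authority, and handoffs between agents) can be sketched in a few lines. This is a toy model under stated assumptions: the `Agent` class, the `dispatch` function, and the action names are all hypothetical and do not reflect OpenAI's actual agent design. It also shows why an audit trail matters for the accountability question.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Agent:
    name: str
    allowed_actions: set[str]  # the agent's defined scope of authority
    memory: list[str] = field(default_factory=list)  # persists across tasks

    def can_handle(self, action: str) -> bool:
        return action in self.allowed_actions


def dispatch(
    action: str, detail: str, agents: list[Agent], audit_log: list[str]
) -> Optional[str]:
    """Offer the task to each agent in order and hand off when out of scope.

    Returns the name of the agent that handled the task, or None if no
    agent was authorized, in which case the task is escalated to a human.
    """
    for agent in agents:
        if agent.can_handle(action):
            agent.memory.append(f"{action}: {detail}")
            audit_log.append(f"{agent.name} handled {action}")
            return agent.name
        audit_log.append(f"{agent.name} declined {action} (out of scope)")
    audit_log.append(f"no agent authorized for {action}; escalate to a human")
    return None


finance = Agent("finance", {"approve_invoice"})
hr = Agent("hr", {"update_leave_balance"})
log: list[str] = []

handler = dispatch("update_leave_balance", "employee 17, +2 days", [finance, hr], log)
print(handler)
print(log)
```

Every decision, including each refusal, lands in `audit_log`. Whatever the real implementation looks like, that kind of record is what oversight of an "AI colleague" would depend on.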

How This Stacks Up Against the Competition

Google’s Enterprise Play

Google is not sitting still. Gemini for Workspace is deeply embedded in Docs, Gmail, Meet, and Sheets, giving it a distribution advantage that OpenAI simply can’t match from scratch. If your company already runs on Google Workspace, the friction to use Gemini AI features is nearly zero.

But there’s a gap. Google’s enterprise AI feels bolted onto productivity tools. OpenAI’s enterprise AI is trying to be the layer that sits above all tools. These are different bets, and it’s not obvious yet which one enterprise buyers prefer. The fact that Google has had to explicitly address privacy concerns around Gemini in Gmail suggests that enterprise trust isn’t automatic, even with distribution.

Anthropic’s Position

Anthropic is making serious inroads in regulated industries, particularly finance and law, where Claude’s emphasis on careful, cited reasoning resonates. Anthropic doesn’t have a direct equivalent to the Frontier tier yet, but its enterprise contracts are growing fast. The company is also pushing hard on security, which matters given how many vulnerabilities AI systems can now surface in enterprise environments.

Microsoft’s Role

Here’s the awkward part: Microsoft is both OpenAI’s biggest investor and its biggest distribution partner through Azure OpenAI Service — and increasingly, a kind of competitor. Copilot for Microsoft 365 is the dominant AI product in enterprise right now by sheer install base. OpenAI’s direct enterprise push, especially Frontier, is a signal that the company wants relationships that don’t run through Redmond. That tension will be worth watching.

What This Means for Businesses Considering Enterprise AI

If you’re an enterprise buyer evaluating this, here’s an honest read of the situation:

- If you’re already on Azure OpenAI Service, this announcement mostly affects the ceiling of what’s possible, not your day-to-day. The Frontier tier is for organizations that want a direct OpenAI relationship with more control and earlier model access.

- If you’re evaluating ChatGPT Enterprise for the first time, the multi-model access and improved admin controls are genuine improvements over where the product was 18 months ago. It’s a more mature platform.

- If you’re interested in AI agents, be clear-eyed that company-wide agentic deployments are still early. The technology is real, but the organizational and governance frameworks most companies need to support autonomous AI agents are lagging behind the products.

- If you’re a smaller business, the Frontier tier isn’t for you. ChatGPT Enterprise starts to make sense at roughly 150 seats and up. Below that, ChatGPT Team or API-based access is the more cost-effective path.

The bigger picture here is that OpenAI is trying to establish itself as an enterprise vendor in the traditional sense — long contracts, dedicated relationships, deep integrations — not just an API provider that companies build on top of. That’s a different business motion, and it requires different trust. The OpenAI Safety Fellowship and the company’s broader transparency efforts are part of building that institutional credibility.

Frequently Asked Questions

What is OpenAI Frontier and who is it for?

Frontier is OpenAI’s top-tier enterprise offering, designed for large organizations that want early model access, custom fine-tuning, and a direct relationship with OpenAI rather than accessing the technology through a cloud provider like Azure. Pricing is negotiated directly and isn’t publicly listed, signaling it targets major enterprises with substantial AI budgets.

How is ChatGPT Enterprise different from ChatGPT Team?

ChatGPT Enterprise is built for larger organizations and includes stronger compliance tooling, SOC 2 Type II compliance, higher usage limits, and now multi-model access. ChatGPT Team is a lighter-weight option for smaller groups that want shared workspaces and basic admin controls without the full enterprise stack.

Are AI agents in enterprise settings ready for widespread use?

Some companies are already running production agentic workflows for specific, bounded tasks — customer support triage, data extraction, internal knowledge retrieval. Company-wide agents with broader authority are still in early deployment, and most organizations will need significant internal governance work before scaling that kind of usage.

How does OpenAI’s enterprise push compare to Google Gemini for business?

Google has a distribution advantage through Workspace, but OpenAI is betting on depth over breadth — more powerful models, more flexible deployment, and a direct enterprise relationship. They’re solving different problems: Google makes AI easier to start using, OpenAI is trying to make AI more central to how a company operates at scale.

The honest bet here is that OpenAI’s enterprise ambitions will succeed or fail based on whether companies actually believe AI agents can own consequential workflows — not just assist with them. If the next 18 months produce real case studies of agents driving measurable business outcomes, the Frontier tier will look prescient. If the agentic vision stalls on reliability and accountability questions, OpenAI will have a very expensive sales motion to walk back. I wouldn’t bet against them, but the proof still has to arrive.