OpenAI’s Codex app just got a serious upgrade — and if you’ve been treating it as a glorified autocomplete tool, that mental model is now officially outdated. The April 2026 update to Codex for macOS and Windows introduces computer use, in-app browsing, image generation, persistent memory, and plugin support. Taken together, these aren’t incremental improvements. OpenAI is trying to turn Codex into the primary interface through which developers interact with their entire workflow — not just their code editor.

How Codex Got Here

It’s easy to forget that OpenAI Codex started life as a code-specialized descendant of GPT-3, released back in 2021 and best known as the engine powering GitHub Copilot. That original Codex was purely an API product — no interface, no memory, just a model you called to complete code.

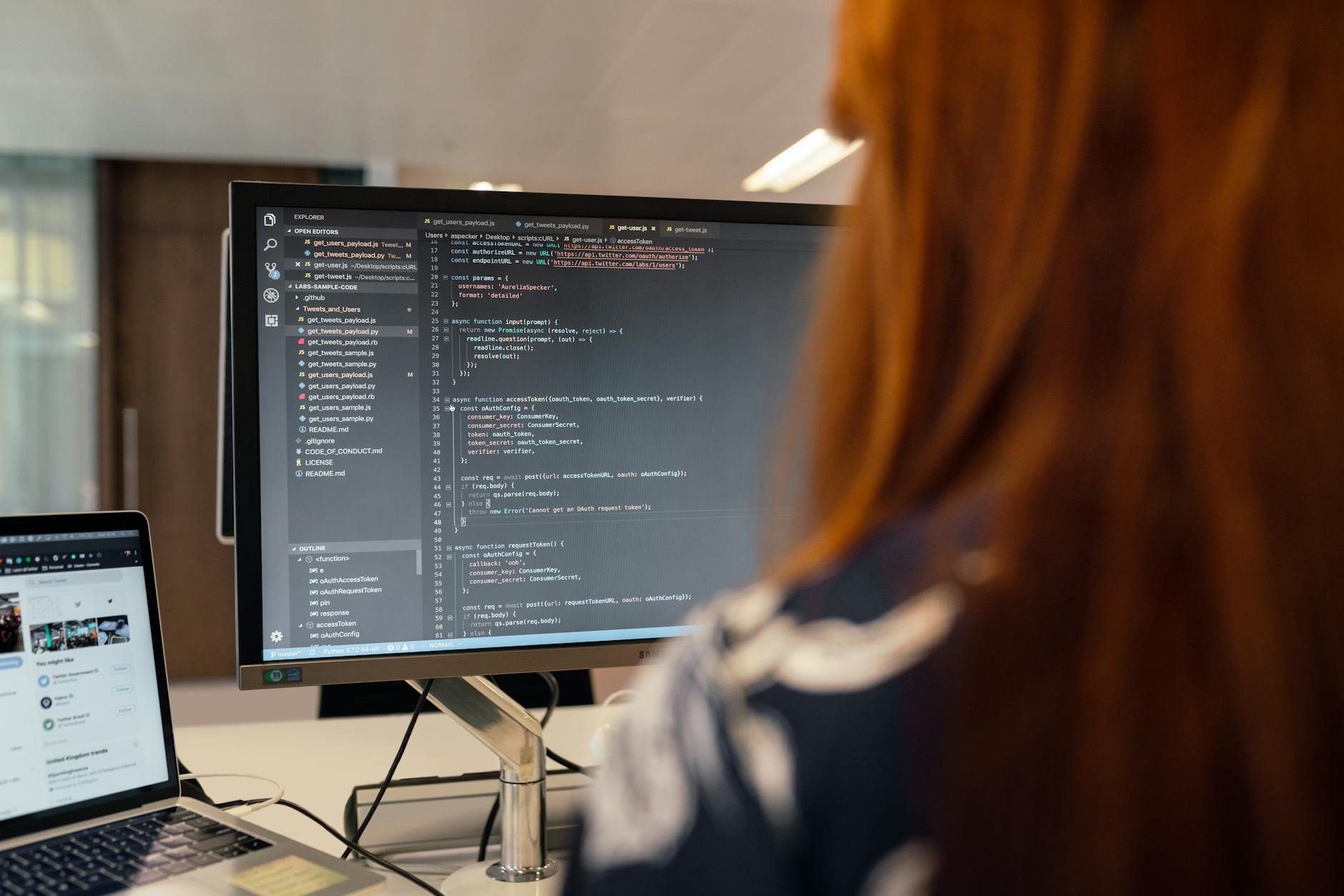

The pivot to a standalone app came later, as OpenAI watched competitors like Cursor and Anthropic’s Claude build rich, opinionated developer environments that went well beyond chat. Cursor, in particular, ate significant mindshare in 2024 and 2025 by embedding AI deeply inside a VS Code fork. OpenAI’s response has been to make Codex a layer that sits above the IDE — something that can orchestrate tasks across your entire machine rather than just within one editor pane.

The timing also makes sense given where OpenAI’s model lineup sits right now. With GPT-5 and the broader agent infrastructure maturing, the company has the underlying capability to support the kind of multi-step, multi-tool workflows this update requires. This isn’t a feature set OpenAI could have shipped 18 months ago without it feeling half-baked.

What’s Actually New in This Update

Let’s go through the five major additions in plain terms, because each one carries different implications.

Computer Use

Computer use lets Codex control your desktop (clicking, typing, navigating applications) in the same way Anthropic’s Claude demonstrated when its own computer use feature arrived in late 2024. For developers, this means Codex can now run your test suite, open your browser, check a deployment dashboard, or interact with a GUI tool that doesn’t have an API. It’s the difference between an assistant that writes code and one that can actually execute a workflow end to end.

In-App Browsing

Codex can now browse the web from inside the app. This sounds small, but it’s practically useful in ways that matter: pulling documentation for a library you’re using, checking a Stack Overflow thread, or verifying that an API endpoint is still live. You’re not copy-pasting between windows anymore. The context just flows into your coding session.

Image Generation

This one’s a bit of a wildcard. Image generation inside Codex is probably aimed at full-stack developers who need quick mockups, placeholder assets, or UI component sketches without spinning up a separate design tool. It won’t replace Figma, but it might save you the round-trip to a separate image tool when you just need something rough and fast.

Memory

Persistent memory in Codex means the app can retain information about your projects, your preferences, and your past interactions. That matters because one of the most common frustrations with AI coding assistants is that every new session starts from zero: you re-explain your stack, your conventions, your constraints. Memory changes that equation. Over time, Codex should get better at understanding how you specifically work.
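To make the idea concrete, here is a minimal sketch of what per-project memory could look like in its simplest form: a JSON file keyed by project name. This is not how Codex implements memory (OpenAI hasn’t documented that). The `ProjectMemory` class, its method names, and the `memory.json` file are all hypothetical, chosen only to illustrate context that survives between sessions.

```python
import json
from pathlib import Path


class ProjectMemory:
    """Hypothetical per-project memory store (illustration only, not Codex's design)."""

    def __init__(self, path):
        self.path = Path(path)
        # Reload whatever earlier sessions wrote, if the file exists.
        if self.path.exists():
            self.data = json.loads(self.path.read_text(encoding="utf-8"))
        else:
            self.data = {}

    def remember(self, project, key, value):
        """Record one fact (for example a team convention) and persist it to disk."""
        self.data.setdefault(project, {})[key] = value
        self.path.write_text(json.dumps(self.data, indent=2), encoding="utf-8")

    def recall(self, project):
        """Return a copy of everything stored for a project, or an empty dict."""
        return dict(self.data.get(project, {}))
```

A first session might call `ProjectMemory("memory.json").remember("billing-api", "test_command", "pytest -q")`, and a later session could read that back with `recall("billing-api")` instead of asking you again. A production memory system would need far more than this, including deciding what is worth storing and when to surface it.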

Plugins

The plugin system opens Codex to third-party integrations. Think Jira, Linear, GitHub, Slack, Datadog: the tools developers already live in. This is probably the feature with the most long-term leverage, because it means OpenAI doesn’t have to build every integration itself. If the plugin ecosystem grows the way ChatGPT plugins initially did (before OpenAI pivoted to GPTs), this could extend Codex’s reach significantly.

Here’s a quick summary of what’s shipping:

- Computer use — desktop control for end-to-end task execution

- In-app browsing — web access without leaving the coding environment

- Image generation — quick asset creation for UI and mockup work

- Memory — persistent context across sessions and projects

- Plugins — third-party integrations with developer tools

The app is available now on macOS and Windows. The announcement didn’t spell out pricing, but Codex currently falls under the ChatGPT Pro and Team subscription tiers, with API access priced separately through OpenAI’s platform.

What This Actually Changes for Developers

Here’s the thing: individual features are fine, but the more interesting question is whether Codex can become the thing developers reach for first — before they open a browser tab, before they switch to a terminal, before they check Jira. That’s the real ambition visible in this update, and it’s a much harder problem than shipping any individual capability.

The computer use addition is where I think the most genuine productivity gains live, at least in the short term. Right now, even with good AI coding tools, there’s a lot of manual orchestration — you take the code suggestion, drop it in your editor, run tests, interpret the output, come back. Computer use collapses several of those steps. You describe what you want done, and Codex can actually run through the workflow. That said, how reliably it handles complex, multi-step desktop interactions will define whether this becomes a daily driver or a demo feature.

The memory capability deserves more attention than it’s probably getting. Developer context is rich and specific: you’re working in a particular codebase, with particular conventions, dealing with particular constraints your team has established. An AI that retains that context isn’t just more convenient; it’s qualitatively more useful. This is an area where OpenAI’s agent infrastructure investments pay off directly, because memory at this level requires more than a simple key-value store. The system has to decide what is worth keeping, tie it to the right project, and bring it back at the right moment.

On the competitive side, this update pits Codex more directly against Cursor and Anthropic’s Claude in its coding-focused modes, and against broader developer agent platforms as well. Microsoft’s GitHub Copilot, which still uses OpenAI models under the hood, is the interesting case here. There’s a version of the future where Codex and Copilot end up competing for the same developer, which would be an odd position for OpenAI to be in with one of its biggest partners. I wouldn’t be surprised if there’s some quiet tension in that relationship as Codex becomes more capable as a standalone product.

The plugin system is the move that could matter most over a 12-to-18-month horizon. If developers start wiring Codex into their CI/CD pipelines, their project management tools, their monitoring dashboards — then Codex stops being an app you open occasionally and starts being infrastructure. That’s a much stickier product. It’s also exactly what integrations like Cloudflare’s Agent Cloud are already gesturing at.

What This Means for Different Users

Not every developer will get the same value from this update. Here’s an honest breakdown:

- Solo developers and indie hackers — The memory and browsing features probably deliver the most immediate value. Less context-switching, more flow.

- Full-stack developers — Computer use plus image generation is an interesting combination for people who move between backend logic and frontend assets regularly.

- Teams — The plugin integrations with project management and communication tools will matter most here, but that depends on which plugins actually ship and how well they work.

- Enterprise developers — Memory raises questions about data handling and privacy that will need clear answers before large organizations adopt it broadly. OpenAI’s security track record, including its GPT-5.4-Cyber work for security teams, will be relevant context here.

How Do I Get Access to the New Codex Features?

The updated Codex app is available on macOS and Windows. Access is tied to ChatGPT Pro and Team subscriptions, with the app downloadable directly from OpenAI’s website. Some features may roll out incrementally rather than all at once.

How Does This Compare to GitHub Copilot?

Copilot lives inside your IDE and is tightly integrated with the editing experience. Codex is positioned as a broader orchestration layer — it can operate across your desktop, not just inside a code editor. They’re complementary in theory, but increasingly overlapping in practice.

Is the Memory Feature Safe for Sensitive Codebases?

OpenAI hasn’t released detailed documentation on how memory data is stored or whether it can be scoped to exclude sensitive information. Enterprise users should check OpenAI’s data processing agreements before enabling memory on confidential projects.
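Until that documentation exists, one cautious habit is to scrub obvious secrets from anything you paste into a session that might be remembered. The sketch below is a user-side precaution, not a Codex feature. The `redact` helper and the pattern list are hypothetical, and a real team would tune the patterns to its own credential formats.

```python
import re

# Hypothetical patterns for illustration; they catch "name = value" style secrets only.
SECRET_PATTERNS = [
    re.compile(r"\b(api[_-]?key|token|password)\b(\s*[=:]\s*)(\S+)", re.IGNORECASE),
]


def redact(text):
    """Replace the value part of anything that looks like a credential assignment."""
    for pattern in SECRET_PATTERNS:
        # Keep the key name and separator, mask only the value.
        text = pattern.sub(lambda m: m.group(1) + m.group(2) + "[REDACTED]", text)
    return text
```

Regex-based redaction misses plenty (multi-line keys, secrets without a recognizable label), so treat it as a first filter and not as a substitute for reading the data processing terms.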

What Plugins Are Available at Launch?

OpenAI hasn’t published a full plugin directory yet. Based on the announcement framing, the plugin system is launching as a platform for third-party developers to build against, so availability will grow over time rather than launching with a complete catalog.

The gap between a capable AI coding assistant and one that developers genuinely trust with complex, real-world workflows is still real — and closing it requires exactly the kind of persistent context, multi-tool orchestration, and environmental awareness this update introduces. Whether Codex executes on that potential will show up in adoption numbers over the next two quarters, and in how quickly the plugin catalog grows beyond the obvious first-party integrations.